Artificial intelligence has rapidly entered our classrooms, our assignments, and our students’ daily lives. As a result, many educators are asking a question that does not yet have a clear answer: What is Responsible AI?

Despite the growing presence of AI in education, no single group or organization has clearly defined what responsible AI use in the classroom should look like. Districts are beginning to create policies, but those policies vary widely and are often still evolving. Some schools attempt to restrict AI entirely, while others encourage experimentation.

In many cases, teachers are left trying to figure it out on their own.

Fortunately, educators are not starting from scratch. Our profession is built on responsibility. Every teacher manages a wide range of duties: lesson planning, instruction, assessment, differentiation, classroom management, student safety, communication with families, collaboration with colleagues, and professional growth. Within all of these responsibilities lies an important principle that guides our work—we strive to do no harm to our students.

It is within this framework of professional responsibility that we can begin to define responsible AI use.

When teachers ask, “Can students use AI?” the concern is rarely about the technology itself. The deeper worry is about cheating, the loss of critical thinking, and the fear that AI will lead to a “dumbing down” of learning. These concerns are understandable. If students rely on AI to produce answers without understanding them, then learning is diminished.

However, banning AI entirely ignores an important reality: students are already using these tools. Instead of asking whether AI belongs in the classroom, a better question might be: How can we teach students to use AI responsibly?

In my own classroom, I try to frame AI as a thinking partner rather than a thinking replacement.

For example, in my Introduction to Computer Science class, students build an app that generates a Mad Lib story. Before AI tools were widely available, some students struggled with this assignment—not because they couldn’t code the program, but because they struggled to write the creative story needed for the Mad Lib.

The purpose of the assignment is not to measure creative writing ability. The goal is to practice using code to solve a problem.

When students use AI to help generate ideas or prompts for their Mad Lib, the barrier of creative writing begins to disappear. Students who may have struggled with storytelling can now focus on the real objective of the assignment: using code to solve a problem. In this case, AI supports learning rather than replacing it.
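To make that separation concrete, here is a minimal sketch of what a Mad Lib program might look like. It is not the actual classroom assignment: the choice of Python, the story template, and the function names `find_blanks` and `fill_story` are all assumptions made for illustration. The point is that the story text sits in one place, where a student might brainstorm with AI, while the coding work of finding the blanks and filling them in remains the student's own.

```python
import re

# Hypothetical story template. This is the creative-writing piece a student
# might brainstorm with an AI tool; each {name} marks a blank to fill.
TEMPLATE = "The {adjective} {animal} decided to {verb} all the way to {place}."


def find_blanks(template):
    """Return the placeholder names in the order they appear in the template."""
    return re.findall(r"\{(\w+)\}", template)


def fill_story(template, words):
    """Replace each {placeholder} with the matching entry from the words dict."""
    return template.format(**words)


# Sample words standing in for what a player would type.
sample_words = {
    "adjective": "sleepy",
    "animal": "llama",
    "verb": "dance",
    "place": "Mars",
}

print(find_blanks(TEMPLATE))
print(fill_story(TEMPLATE, sample_words))
```

In a classroom version, students would likely collect the words with `input()` prompts, one per blank, instead of a hard-coded dictionary. Swapping in a different story only means changing `TEMPLATE`; the code that solves the problem stays the same.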

At the same time, responsible AI use requires students to question the technology itself.

In another activity, I intentionally use AI image generation tools to explore bias in AI systems. Students generate images of people in different professions such as doctor, lawyer, nurse, or janitor. The results often reveal patterns: certain genders or races appear more frequently in certain roles.

These moments create powerful classroom discussions. Students begin to see that AI is not neutral; it reflects the data it was trained on and the biases embedded within that data. Responsible AI use means recognizing that AI outputs should not simply be accepted as truth.

Experiences like these have shaped how I think about responsible AI in the classroom.

Responsible AI use is the process of using the power of AI to think critically, gain insight, and bridge the gap between knowledge and understanding while maintaining human judgment, transparency, ethics, and accountability.

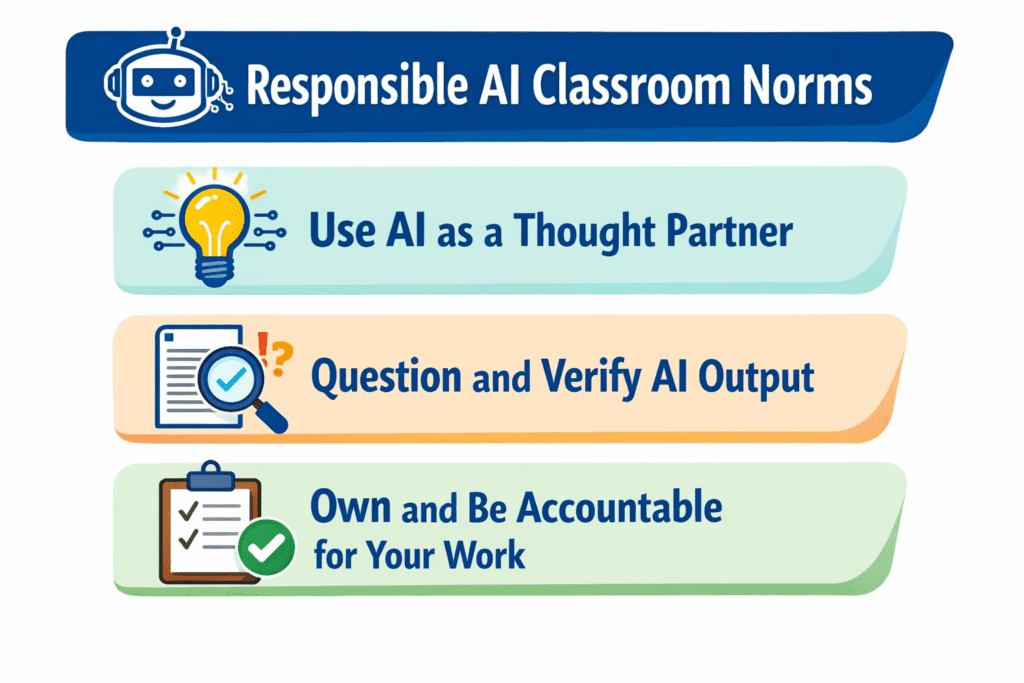

In practice, this means helping students develop a few simple habits when using AI:

Use AI as a thought partner, not the producer of all thought. AI should help students brainstorm, explore ideas, and ask better questions—not replace their own thinking.

Do not blindly copy AI output. Students should evaluate, revise, and verify what AI produces. Just because AI generated something does not mean it is correct.

Own your work and remain accountable. Students should be transparent about when and how AI was used and remain responsible for the final product they submit.

A common misconception about Responsible AI is the belief that we can continue teaching and assessing student knowledge in exactly the same ways we have for years. AI changes the landscape of learning. Assignments that once measured recall may now require deeper thinking, creativity, or application to remain meaningful.

This is where computer science educators can play an important role. In many schools, CS teachers are viewed as technology leaders. By sharing classroom experiences and insights about AI, we can help guide conversations with colleagues, administrators, and policy makers. Responsible AI policies should not be created in isolation; they should be informed by the realities of teaching and learning.

Ultimately, Responsible AI will not have a single universal definition. What responsible use looks like in a computer science classroom may differ from what it looks like in a history, math, or art class.

But if we anchor our thinking in the responsibilities we already hold as educators—to support learning, promote critical thinking, and act in the best interests of our students—we have a strong starting point.

Responsible AI does not begin with technology.

It begins with teachers.

About the Author

Matt Alonzo is a 22-year veteran mathematics teacher in his 14th year of teaching computer science at Parkway North High School in St. Louis, Missouri. A lifelong learner, he continually seeks opportunities to grow in CS education while centering his work on equity. As a member of cohort 5 of the CSTA Impact Fellowship (formerly the Equity Fellowship), Matt gained a deeper understanding of the potential harms AI can pose to students, particularly those from underrepresented backgrounds. This experience drives his excitement for the CSTA Responsible AI Fellowship, where he hopes to shape how AI is used in education. Matt also serves as equity chair of the CSTA Missouri chapter, was the 2023 CS Teaching Excellence Award winner, and was Parkway North’s 2022–23 Teacher of the Year. In addition, he is in his ninth year on his local school board, currently serving as president.